Preventing Safety Hazards When Using Open-Source AI Agents

"Have you 'raised lobsters'?" has become the "social currency" in the AI circle. Cities like Hefei, Anhui province and Shenzhen, Guangdong province have issued policies to support "raising lobsters," and some major Internet companies have also announced that their products would be integrated with "lobsters."

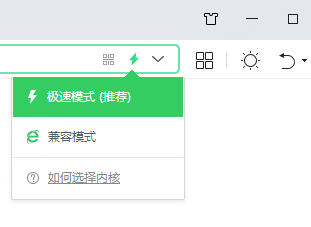

This "lobster" is not a delicacy on the dining table but an open-source AI agent called OpenClaw, nicknamed for its lobster-inspired icon. OpenClaw is an autonomous agent that goes beyond traditional chatbots, which "only talk but do nothing." Like a "cyber worker" that is online 24 hours a day, it can read and write files, control browsers, and even complete complex tasks such as writing and planning on its own, truly achieving "You give the order, it carries it out."

It is worth noting that multiple risks lie behind the bustling "lobster-farming boom." As Qihoo 360's founder Zhou Hongyi said, "The 'OpenClaw' industry is still in its early stages, and configuring it is an extremely difficult task for ordinary people."

Some users have reported that OpenClaw mistakenly deleted their important data, and others have suffered privacy leaks because OpenClaw had been granted excessive permissions. Criminals have even used disguised "skill packs" to implant malicious plugins, turning "raising lobsters" into a case of "inviting wolves into the house."

Hu Xia, a leading scientist at the Shanghai Artificial Intelligence Laboratory, said, "If 'lobster' represents a sharp weapon in users' hands, then at present, this knife has no sheath."

Specifically, "raising lobsters" carries three main types of risk: a high barrier to entry, significant cost, and security hazards. Installing and using OpenClaw is demanding. It emphasizes a "local-first" approach, which requires complex environment configuration and model integration. Even people with programming skills often find it difficult.

Moreover, the cost of "raising lobsters" is not low. Although OpenClaw itself is free, running it locally requires computing power, and renting cloud resources incurs fees. Useful plugins often require payment, and some users spend as much as several hundred RMB per day.

The most critical risk to guard against is security. Recently, both the National Vulnerability Database of the Ministry of Industry and Information Technology and the National Computer Network Emergency Response Technical Team/Coordination Center of China have issued notices highlighting the potential security hazards associated with "raising lobsters."

Experts noted that the greatest risk posed by autonomous intelligent agents like OpenClaw lies not in code errors but in granting AI excessive "system proxy authority." This could lead to uncontrolled behavior at the micro level, hidden communication among agents, and new challenges to defenses at the macro level.

He Xiangnan, vice dean of the School of Artificial Intelligence and Data Science at the University of Science and Technology of China, said, "It is urgent to conduct in-depth research on the formation and evolution mechanisms of self-evolving intelligent agents and their networks, and accelerate the construction of an integrated system of 'internal security - defense reinforcement' to prevent problems before they occur."

In Shanghai, some research institutions have taken action. The Shanghai Artificial Intelligence Laboratory has issued a white paper on systematic risk identification. At the same time, an intelligent agent defense model capable of quickly diagnosing risks has been made available for free. These efforts explore an "internal evolution" governance framework that embeds safety guidelines in the decision-making layer of intelligent agents.

"The aim of these efforts is to deeply integrate security capabilities into the entire chain of AI development, providing a systematic solution for 'internal security' in the era of intelligent agents," Hu said.